Retrievers play a crucial role in the LangChain framework by providing a flexible interface that returns documents based on unstructured queries. Unlike vector stores, retrievers are not required to store documents; their primary function is to retrieve relevant information. While vector stores can serve as the backbone of a retriever, various types of retrievers exist, each tailored to specific use cases.

Learning Objectives

- Explore the pivotal role of retrievers in LangChain, enabling efficient and flexible document retrieval for diverse applications.

- Learn how LangChain’s retrievers, from vector stores to MultiQuery and Contextual Compression, streamline access to relevant information.

- Compare the various retriever types in LangChain and see how each is tailored to optimize query handling and data access.

- Dive into LangChain’s retriever functionality, examining tools for enhancing document retrieval precision and relevance.

- Understand how LangChain’s custom retrievers adapt to specific needs, empowering developers to create highly responsive applications.

- Discover LangChain’s retrieval techniques that integrate language models and vector databases for more accurate and efficient search results.

Retrievers in LangChain

Retrievers accept a string query as input and output a list of Document objects. This mechanism allows applications to fetch pertinent information efficiently, enabling advanced interactions with large datasets or knowledge bases.

1. Using a Vectorstore as a Retriever

A vector store retriever efficiently retrieves documents by leveraging vector representations. It serves as a lightweight wrapper around the vector store class, conforming to the retriever interface and utilizing methods like similarity search and Maximum Marginal Relevance (MMR).
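To make the idea concrete, here is a minimal, library-free sketch of what a similarity-search retriever does: embed the documents once, embed the query, rank by cosine similarity, and return the top k. The `Doc` class, the word-count `toy_embed` "embedding", and `ToyVectorRetriever` are hypothetical stand-ins, not LangChain's `Document`, embedding models, or vector store retriever.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Doc:
    """Hypothetical stand-in for a LangChain Document: text plus metadata."""
    page_content: str
    metadata: dict = field(default_factory=dict)


VOCAB = ["battery", "screen", "price", "camera"]


def toy_embed(text: str) -> list:
    """Hypothetical 'embedding': counts of a few vocabulary words in the text."""
    words = text.lower().replace(".", " ").replace("?", " ").replace(",", " ").split()
    return [float(words.count(w)) for w in VOCAB]


def cosine(a: list, b: list) -> float:
    """Cosine similarity; returns 0.0 when either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class ToyVectorRetriever:
    """Sketch of a retriever wrapping an in-memory vector index."""

    def __init__(self, embed, docs, k: int = 4):
        self.embed = embed
        self.k = k
        # Index once: store (vector, document) pairs
        self.index = [(embed(d.page_content), d) for d in docs]

    def invoke(self, query: str) -> list:
        query_vec = self.embed(query)
        # Rank every stored document by similarity to the query, keep the top k
        ranked = sorted(self.index, key=lambda pair: cosine(query_vec, pair[0]), reverse=True)
        return [doc for _, doc in ranked[: self.k]]


reviews = [
    Doc("Battery lasts two days, great battery."),
    Doc("The screen is bright."),
    Doc("Price is too high."),
]
retriever = ToyVectorRetriever(toy_embed, reviews, k=1)
top = retriever.invoke("How is the battery?")
print(top[0].page_content)  # Battery lasts two days, great battery.
```

Whatever the backing store, the shape of the interface is the same: a string goes in and a list of documents comes out.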

To create a retriever from a vector store, use the .as_retriever method. For example, with a Pinecone vector store based on customer reviews, we can set it up as follows:

```python
from langchain_community.document_loaders import CSVLoader
from langchain_community.vectorstores import Pinecone
from langchain_openai import OpenAIEmbeddings
from langchain_text_splitters import CharacterTextSplitter

# Load the reviews (one Document per CSV row) and split them into chunks
loader = CSVLoader("customer_reviews.csv")
documents = loader.load()
text_splitter = CharacterTextSplitter(chunk_size=500, chunk_overlap=50)
texts = text_splitter.split_documents(documents)

# Embed the chunks and store them in an existing Pinecone index;
# "customer-reviews" is a placeholder name, and the Pinecone client
# must already be configured with your API key
embeddings = OpenAIEmbeddings()
vectorstore = Pinecone.from_documents(texts, embeddings, index_name="customer-reviews")

retriever = vectorstore.as_retriever()
```

We can now use this retriever to query relevant reviews:

```python
docs = retriever.invoke("What do customers think about the battery life?")
```

By default, the retriever uses similarity search, but we can specify MMR as the search type:

```python
retriever = vectorstore.as_retriever(search_type="mmr")
```

Additionally, we can pass parameters such as a similarity score threshold, or limit the number of results with top-k:

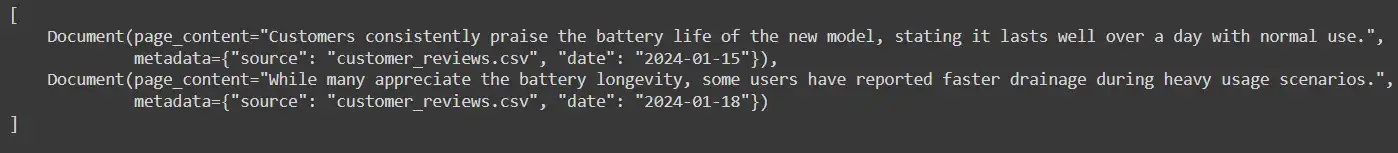

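```python
# score_threshold only takes effect with the "similarity_score_threshold" search type
retriever = vectorstore.as_retriever(search_type="similarity_score_threshold", search_kwargs={"k": 2, "score_threshold": 0.6})
```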
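Conceptually, the `k` and `score_threshold` settings act on the scored candidates in two stages: take the k best matches, then drop any whose relevance score falls below the threshold. The sketch below assumes relevance scores already normalized to [0, 1] and uses a hypothetical `apply_search_kwargs` helper; it illustrates the filtering logic, not LangChain's implementation.

```python
def apply_search_kwargs(scored, k=4, score_threshold=None):
    """Keep the k highest-scoring (doc, score) pairs, then apply an optional threshold.

    Assumes scores are relevance values in [0, 1], higher meaning more relevant.
    """
    ranked = sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]
    if score_threshold is None:
        return ranked
    return [(doc, score) for doc, score in ranked if score >= score_threshold]


candidates = [("review A", 0.9), ("review B", 0.55), ("review C", 0.7), ("review D", 0.65)]
print(apply_search_kwargs(candidates, k=2))                       # [('review A', 0.9), ('review C', 0.7)]
print(apply_search_kwargs(candidates, k=2, score_threshold=0.8))  # [('review A', 0.9)]
```

Because the threshold is applied after the top-k cut in this sketch, a strict threshold can return fewer than k documents, or none at all.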

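Wrapping a vector store as a retriever lets it plug into any LangChain component that expects a retriever, while you keep control over the search strategy (similarity, MMR, or score threshold) and the number of results returned.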
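The `search_type="mmr"` option trades a little relevance for diversity. MMR picks documents one at a time, scoring each candidate as lambda_mult × (similarity to the query) − (1 − lambda_mult) × (highest similarity to any document already picked). The sketch below implements that rule in plain Python over toy 2-D vectors; the `mmr` function and the vectors are illustrative assumptions, while LangChain's vector stores implement MMR themselves (with parameters such as `fetch_k` and `lambda_mult`).

```python
import math


def cosine(a, b):
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def mmr(query_vec, doc_vecs, k=2, lambda_mult=0.5):
    """Return indices of k documents chosen greedily by MMR.

    lambda_mult=1.0 ranks purely by relevance; lower values penalize
    documents that are similar to ones already selected.
    """
    selected = []
    candidates = list(range(len(doc_vecs)))

    def mmr_score(i):
        relevance = cosine(query_vec, doc_vecs[i])
        redundancy = max((cosine(doc_vecs[i], doc_vecs[j]) for j in selected), default=0.0)
        return lambda_mult * relevance - (1 - lambda_mult) * redundancy

    while candidates and len(selected) < k:
        best = max(candidates, key=mmr_score)
        selected.append(best)
        candidates.remove(best)
    return selected


query = [1.0, 0.0]
docs = [
    [1.0, 0.10],   # highly relevant
    [1.0, 0.12],   # near-duplicate of the first document
    [1.0, -0.50],  # less relevant, but different
]
print(mmr(query, docs, k=2, lambda_mult=1.0))  # [0, 1]  pure relevance
print(mmr(query, docs, k=2, lambda_mult=0.5))  # [0, 2]  near-duplicate skipped
```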

2. Using the MultiQueryRetriever

The MultiQueryRetriever addresses common limitations of distance-based vector database retrieval, such as sensitivity to how a query is worded and embeddings that fail to capture its meaning. It automates prompt tuning by using a large language model (LLM) to generate multiple queries that approach the user's input from different perspectives, retrieves relevant documents for each query, and combines the unique results into a richer set of potentially relevant documents.
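The core idea fits in a few lines of plain Python: generate several rephrasings, retrieve for each, and keep the unique union of the results. In this sketch, `fake_generate_queries` is a hypothetical stand-in for the LLM call and `keyword_retrieve` a hypothetical stand-in for the vector store retriever; neither is LangChain code.

```python
CORPUS = [
    "Long battery life is the most praised feature.",
    "Customers love a sharp, bright screen.",
    "A good camera matters to many buyers.",
    "Shipping was slow.",
]
KEYWORDS = {"battery", "screen", "camera", "shipping"}


def keyword_retrieve(query):
    """Hypothetical retriever: documents sharing a known keyword with the query."""
    terms = {kw for kw in KEYWORDS if kw in query.lower()}
    return [doc for doc in CORPUS if any(t in doc.lower() for t in terms)]


def fake_generate_queries(question):
    """Hypothetical stand-in for the LLM that rephrases the question."""
    return [
        "How do customers rate battery life?",
        "Is screen quality important to buyers?",
        "Do customers care about the camera and the screen?",
    ]


def multi_query_retrieve(question, generate_queries, retrieve):
    """Retrieve for every generated query and return the unique union of documents."""
    seen = set()
    unique_docs = []
    for query in generate_queries(question):
        for doc in retrieve(query):
            if doc not in seen:  # skip documents already returned by an earlier query
                seen.add(doc)
                unique_docs.append(doc)
    return unique_docs


question = "What features do customers value in smartphones?"
print(keyword_retrieve(question))  # [] : the original wording matches no keyword
print(len(multi_query_retrieve(question, fake_generate_queries, keyword_retrieve)))  # 3
```

The original question retrieves nothing here, while the rephrasings together surface three distinct reviews; the screen review is found twice but kept once.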

Building a Sample Vector Database

To demonstrate the MultiQueryRetriever, let’s create a vector store using product descriptions from a CSV file:

```python
from langchain_community.document_loaders import CSVLoader
from langchain_community.vectorstores import FAISS
from langchain_openai import OpenAIEmbeddings
from langchain_text_splitters import CharacterTextSplitter

# Load product descriptions
loader = CSVLoader("product_descriptions.csv")
data = loader.load()

# Split the text into chunks
text_splitter = CharacterTextSplitter(chunk_size=300, chunk_overlap=50)
documents = text_splitter.split_documents(data)

# Create the vector store
embeddings = OpenAIEmbeddings()
vectordb = FAISS.from_documents(documents, embeddings)
```

Simple Usage

To utilize the MultiQueryRetriever, specify the LLM for query generation:

```python
from langchain.retrievers.multi_query import MultiQueryRetriever
from langchain_openai import ChatOpenAI

question = "What features do customers value in smartphones?"
llm = ChatOpenAI(temperature=0)

retriever_from_llm = MultiQueryRetriever.from_llm(
    retriever=vectordb.as_retriever(), llm=llm
)

unique_docs = retriever_from_llm.invoke(question)
len(unique_docs)  # Number of unique documents retrieved
```

The MultiQueryRetriever generates multiple queries, enhancing the diversity and relevance of the retrieved documents.

Customizing Your Prompt

To tailor the generated queries, you can create a custom PromptTemplate and an output parser:

```python
from typing import List

from langchain_core.output_parsers import BaseOutputParser
from langchain_core.prompts import PromptTemplate

# Custom output parser: one query per non-empty line of LLM output
class LineListOutputParser(BaseOutputParser[List[str]]):
    def parse(self, text: str) -> List[str]:
        return list(filter(None, text.strip().split("\n")))

output_parser = LineListOutputParser()

# Custom prompt for query generation (asks for one version per line to match the parser)
QUERY_PROMPT = PromptTemplate(
    input_variables=["question"],
    template="""Generate five different versions of the following question, one per line: {question}""",
)

# Chain: prompt -> LLM -> list of query strings
llm_chain = QUERY_PROMPT | llm | output_parser

# Initialize the retriever with the custom chain
retriever = MultiQueryRetriever(retriever=vectordb.as_retriever(), llm_chain=llm_chain)

unique_docs = retriever.invoke("What features do customers value in smartphones?")
len(unique_docs)  # Number of unique documents retrieved
```

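Because it merges the results of several differently worded queries, the MultiQueryRetriever returns a more diverse and comprehensive set of documents than a single query would, and a custom prompt lets you control how those queries are generated.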

3. How to Perform Retrieval with Contextual Compression

Retrieving relevant information from large document collections can be challenging, especially when the specific queries users will pose are unknown at the time of data ingestion. Often, valuable insights are buried in lengthy documents, leading to inefficient and costly calls to language models (LLMs) while providing less-than-ideal responses. Contextual compression addresses this issue by refining the retrieval process, ensuring that only pertinent information is returned based on the user’s query.

Overview of Contextual Compression

The Contextual Compression Retriever operates by integrating a base retriever with a Document Compressor. Instead of returning documents in their entirety, this approach compresses them according to the context provided by the query. This compression involves both reducing the content of individual documents and filtering out irrelevant ones.
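To illustrate the two kinds of compression described above (trimming each document and dropping irrelevant ones), here is a plain-Python sketch. A real `LLMChainExtractor` asks an LLM which passages are relevant; this sketch swaps in a much cruder, hypothetical keyword-overlap test (`content_words`, `compress_documents`) purely to show the data flow.

```python
import re

STOPWORDS = {"a", "and", "are", "being", "for", "in", "is", "of", "on", "the", "to", "what"}


def content_words(text):
    """Lowercased words minus a tiny stopword list (hypothetical relevance signal)."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS}


def compress_documents(query, docs):
    """Keep only query-relevant sentences; drop documents with none left."""
    query_words = content_words(query)
    compressed = []
    for doc in docs:
        sentences = re.split(r"(?<=[.!?])\s+", doc.strip())
        kept = [s for s in sentences if content_words(s) & query_words]
        if kept:  # a document with no relevant sentence is filtered out entirely
            compressed.append(" ".join(kept))
    return compressed


docs = [
    "The committee met on Tuesday. It proposed a carbon tax to combat emissions.",
    "Local weather was sunny all week.",
    "Climate change threatens coastal cities. Tickets cost ten dollars.",
]
query = "What actions are being proposed to combat climate change?"
for passage in compress_documents(query, docs):
    print(passage)
# It proposed a carbon tax to combat emissions.
# Climate change threatens coastal cities.
```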

Implementation Steps

1. Initialize the Base Retriever: Begin by setting up a vanilla vector store retriever. For example, consider a news article on climate change policy:

```python
from langchain_community.document_loaders import TextLoader
from langchain_community.vectorstores import FAISS
from langchain_openai import OpenAIEmbeddings
from langchain_text_splitters import CharacterTextSplitter

# Load and split the article
documents = TextLoader("climate_change_policy.txt").load()
text_splitter = CharacterTextSplitter(chunk_size=1000, chunk_overlap=0)
texts = text_splitter.split_documents(documents)

# Initialize the vector store retriever
retriever = FAISS.from_documents(texts, OpenAIEmbeddings()).as_retriever()
```

2. Perform an Initial Query: Execute a query to see the results returned by the base retriever, which may include relevant as well as irrelevant information.

```python
docs = retriever.invoke("What actions are being proposed to combat climate change?")
```

3. Enhance Retrieval with Contextual Compression: Wrap the base retriever with a ContextualCompressionRetriever, utilizing an LLMChainExtractor to extract relevant content:

```python
from langchain.retrievers import ContextualCompressionRetriever
from langchain.retrievers.document_compressors import LLMChainExtractor
from langchain_openai import OpenAI

# The LLM reads each retrieved document and extracts only the query-relevant parts
llm = OpenAI(temperature=0)
compressor = LLMChainExtractor.from_llm(llm)

compression_retriever = ContextualCompressionRetriever(
    base_compressor=compressor, base_retriever=retriever
)

# Perform the compressed retrieval
compressed_docs = compression_retriever.invoke("What actions are being proposed to combat climate change?")
```

4. Review the Compressed Results: The ContextualCompressionRetriever processes the initial documents and extracts only the information relevant to the query, optimizing the response.

Creating a Custom Retriever

A retriever is essential in many LLM applications. It is tasked with fetching relevant documents based on user queries. These documents are formatted into prompts for the LLM, enabling it to generate appropriate responses.

Interface

To create a custom retriever, extend the BaseRetriever class and implement the following methods:

| Method | Description | Required/Optional |
|---|---|---|
| _get_relevant_documents | Retrieve documents relevant to a query. | Required |
| _aget_relevant_documents | Asynchronous implementation for native support. | Optional |

Inheriting from BaseRetriever gives your retriever the standard Runnable functionality, such as invoke, ainvoke, batch, and stream.
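Why is the async method optional? As we understand LangChain's BaseRetriever, if _aget_relevant_documents is not overridden, the async entry points fall back to running the synchronous method in an executor, so overriding it mainly pays off when you have a natively async data source. The plain-asyncio sketch below mimics that fallback pattern with hypothetical class names (`SketchBaseRetriever`, `KeywordRetriever`); it is not LangChain's code.

```python
import asyncio
from typing import List


class SketchBaseRetriever:
    """Hypothetical base class mimicking a sync/async retriever split."""

    def _get_relevant_documents(self, query: str) -> List[str]:
        raise NotImplementedError

    async def _aget_relevant_documents(self, query: str) -> List[str]:
        # Default async path: run the sync implementation in a worker thread
        return await asyncio.to_thread(self._get_relevant_documents, query)

    def invoke(self, query: str) -> List[str]:
        return self._get_relevant_documents(query)

    async def ainvoke(self, query: str) -> List[str]:
        return await self._aget_relevant_documents(query)


class KeywordRetriever(SketchBaseRetriever):
    """Only the required sync method is implemented; async comes for free."""

    def __init__(self, docs: List[str]):
        self.docs = docs

    def _get_relevant_documents(self, query: str) -> List[str]:
        return [d for d in self.docs if query.lower() in d.lower()]


retriever = KeywordRetriever(["Dogs are great companions.", "Cats are independent pets."])
print(retriever.invoke("dog"))                # ['Dogs are great companions.']
print(asyncio.run(retriever.ainvoke("cat")))  # ['Cats are independent pets.']
```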

Example

Here’s an example of a simple retriever:

```python
from typing import List

from langchain_core.documents import Document
from langchain_core.retrievers import BaseRetriever

class ToyRetriever(BaseRetriever):
    """A simple retriever that returns the top k documents containing the user query."""

    documents: List[Document]
    k: int

    def _get_relevant_documents(self, query: str) -> List[Document]:
        # Case-insensitive substring match, truncated to the first k hits
        matching_documents = [
            doc for doc in self.documents if query.lower() in doc.page_content.lower()
        ]
        return matching_documents[: self.k]

# Example usage
documents = [
    Document(page_content="Dogs are great companions.", metadata={"type": "dog"}),
    Document(page_content="Cats are independent pets.", metadata={"type": "cat"}),
]

retriever = ToyRetriever(documents=documents, k=1)
result = retriever.invoke("dog")
print(result[0].page_content)  # Dogs are great companions.
```

This implementation provides a straightforward way to retrieve documents based on user input, illustrating the core functionality of a custom retriever in LangChain.

Conclusion

In the LangChain framework, retrievers are powerful tools that enable efficient access to relevant information across various document types and use cases. By understanding and implementing different retriever types—such as vector store retrievers, the MultiQueryRetriever, and the Contextual Compression Retriever—developers can tailor document retrieval to their application’s specific needs.

Each retriever type offers unique advantages, from handling complex queries with MultiQueryRetriever to optimizing responses with Contextual Compression. Additionally, creating custom retrievers allows for even greater flexibility, accommodating specialized requirements that built-in options may not meet. Mastering these retrieval techniques empowers developers to build more effective and responsive applications, harnessing the full potential of language models and large datasets.


Frequently Asked Questions

Q1. What is the primary role of a retriever in LangChain?

Ans. A retriever’s primary role is to fetch relevant documents in response to a query. This helps applications efficiently access necessary information from large datasets without needing to store the documents themselves.

Q2. How does a retriever differ from a vector store?

Ans. A vector store is used for storing documents in a way that allows similarity-based retrieval, while a retriever is an interface designed to retrieve documents based on queries. Although vector stores can be part of a retriever, the retriever’s job is focused on fetching relevant information.

Q3. How does the MultiQueryRetriever improve search results?

Ans. The MultiQueryRetriever improves search results by creating multiple variations of a query using a language model. This method captures a broader range of documents that might be relevant to differently phrased questions, enhancing the diversity of retrieved information.

Q4. What is contextual compression, and why is it useful?

Ans. Contextual compression refines retrieval results by reducing document content to only the relevant sections and filtering out unrelated information. This is especially useful in large collections where full documents might contain extraneous details, saving resources and providing more focused responses.

Q5. What do I need to set up a MultiQueryRetriever?

Ans. To set up a MultiQueryRetriever, you need a vector store for document storage, a language model (LLM) to generate multiple query perspectives, and, optionally, a custom prompt template to refine query generation further.

Hi, I am Janvi, a passionate data science enthusiast currently working at Analytics Vidhya. My journey into the world of data began with a deep curiosity about how we can extract meaningful insights from complex datasets.
